Goroutines: The Power Behind Go's Concurrency

Understanding Goroutines: Key to Go's Concurrent Strength

If you’ve ever worked with Java threads, Python’s multiprocessing, or JavaScript async/await, you know that concurrency can be tricky.

It’s either too complex, too heavy, or too unpredictable.

Then along comes Go with a fresh take: simple, efficient, and elegant concurrency.

The magic ingredient? Goroutines.

In this post, we’ll break down how goroutines work, how they differ from threads or processes, and why Go’s approach is a game-changer for modern developers.

Before diving into goroutines, let's first understand multithreading and multiprocessing. I have written a separate blog post on this, so check it out before moving forward.

👉 Multiprocessing vs Multithreading: Understanding Concurrency and Parallelism

If you've read that, let's move on to goroutines now.

What Are Goroutines?

A goroutine is a function executing concurrently with other goroutines in the same address space. You start one by putting the go keyword before a function or method call.

Example:

```go
package main

import (
	"fmt"
	"time"
)

func printMessage(msg string) {
	for i := 1; i <= 3; i++ {
		fmt.Println(msg, i)
		time.Sleep(500 * time.Millisecond)
	}
}

func main() {
	go printMessage("Goroutine") // run concurrently
	printMessage("Main Function")
}
```

Output (order may vary)

```
Main Function 1
Goroutine 1
Main Function 2
Goroutine 2
Main Function 3
Goroutine 3
```

What’s happening here?

The main function runs in the "main goroutine."

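go printMessage("Goroutine") starts a new goroutine, and both calls to printMessage then run concurrently; the interleaved output confirms that. Note that when main returns, the program exits without waiting for other goroutines. Here the goroutine gets to print all three lines only because main spends about the same amount of time in its own loop.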

How Goroutines Differ From Multithreading in Other Languages

The core difference — who schedules work?

Threads (Java/C++/pthreads):

Typically, there is a 1:1 mapping between a language thread and an OS thread.

The operating system kernel schedules threads onto CPU cores using its scheduler.

Creating a thread is relatively expensive — it involves memory and kernel bookkeeping.

The OS decides when to run, pause, or preempt each thread.

Goroutines:

The Go runtime uses a work-stealing scheduler (an M:N model) to distribute goroutines across OS threads, which in turn run on the available CPU cores, maximising CPU utilisation and minimising context-switching overhead.

The M:N model is the scheduling approach the Go runtime uses to multiplex many goroutines (M) onto a smaller number of operating system threads (N).

In simple terms, it's like a team of highly adaptable workers (goroutines) sharing a limited set of desks (OS threads) in an office.

Implication: Goroutines are extremely cheap to create and schedule compared to OS threads, allowing programs to scale to thousands or millions of concurrent tasks.
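To make "cheap to create" concrete, here is a minimal sketch that starts 10,000 goroutines, parks them all, and then asks the runtime how many goroutines and OS threads exist. The helper name spawnParked is hypothetical, and sync.WaitGroup (covered in a later post) is used here only as a gate and as a way to wait for completion. runtime.NumGoroutine and the "threadcreate" profile from runtime/pprof are standard library calls; the exact numbers depend on your machine and Go version, but the thread count should stay far below the goroutine count.

```go
package main

import (
	"fmt"
	"runtime"
	"runtime/pprof"
	"sync"
	"sync/atomic"
)

// spawnParked starts n goroutines that all wait on a shared gate, records how
// many goroutines and OS threads exist while they are parked, then releases them.
func spawnParked(n int) (goroutines, threads int, completed int64) {
	var gate sync.WaitGroup // holds every goroutine until we release it
	gate.Add(1)

	var done sync.WaitGroup // lets us wait until all goroutines have finished
	var count atomic.Int64

	for i := 0; i < n; i++ {
		done.Add(1)
		go func() {
			defer done.Done()
			gate.Wait() // a parked goroutine does not tie up an OS thread
			count.Add(1)
		}()
	}

	goroutines = runtime.NumGoroutine()
	threads = pprof.Lookup("threadcreate").Count() // OS threads created so far

	gate.Done() // release all goroutines at once
	done.Wait()
	return goroutines, threads, count.Load()
}

func main() {
	g, t, c := spawnParked(10_000)
	fmt.Println("goroutines:", g)
	fmt.Println("OS threads created:", t)
	fmt.Println("completed:", c)
}
```

Each of the 10,000 goroutines is a real, independently scheduled task, yet the runtime serves them all with roughly as many threads as GOMAXPROCS plus a few helpers.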

Memory cost and stacks

OS Threads:

Each thread usually reserves a relatively large stack — often 1MB or more depending on the platform.

Creating many threads can quickly exhaust memory.

Goroutines:

Start with a tiny stack, on the order of a few KB (2 KB minimum in recent Go versions).

Stacks grow and shrink dynamically as needed; the runtime transparently allocates a larger stack and copies frames.

Because each goroutine consumes far less memory initially, creating hundreds of thousands of them is feasible on an ordinary machine.

Why this matters: Goroutines let you model many independent tasks without paying huge memory costs per task.
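To see dynamic stack growth in action, the minimal sketch below runs a deliberately deep (non-tail) recursion on a new goroutine. The helper names sumTo and sumInGoroutine are hypothetical, and sync.WaitGroup is used only to wait for the result. The goroutine starts with a small stack, and the runtime grows it transparently as the calls nest; no stack size has to be configured up front.

```go
package main

import (
	"fmt"
	"sync"
)

// sumTo adds 1..n recursively. It is deliberately not tail-recursive,
// so each level keeps its own frame on the goroutine's stack.
func sumTo(n int) int {
	if n == 0 {
		return 0
	}
	return n + sumTo(n-1)
}

// sumInGoroutine runs sumTo on a fresh goroutine and waits for the result.
func sumInGoroutine(n int) int {
	var wg sync.WaitGroup
	var result int
	wg.Add(1)
	go func() {
		defer wg.Done()
		// The goroutine starts with a small stack; the runtime grows it
		// (allocating a larger one and copying the frames) as the recursion deepens.
		result = sumTo(n)
	}()
	wg.Wait()
	return result
}

func main() {
	fmt.Println("sum:", sumInGoroutine(100_000)) // 100,000 nested calls
}
```

Running it prints sum: 5000050000. The ceiling for this growth is set by runtime/debug.SetMaxStack, which defaults to 1 GB on 64-bit systems.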

Scheduling: M:N, work-stealing, and GOMAXPROCS

The M:N scheduling model in Go (many goroutines onto fewer OS threads) can be easily understood using a chef and kitchen analogy.

Imagine a busy restaurant kitchen with:

Many Recipes/Orders (M) = Goroutines: There are potentially thousands of incoming food orders (goroutines), each representing a task that needs to be completed.

A Few Stoves/Cooking Stations (N) = OS Threads: The kitchen only has a limited number of cooking stations or stoves (OS threads, which run on the CPU cores) where actual cooking (CPU work) can happen simultaneously.

The Head Chef = The Go Runtime Scheduler: A highly efficient head chef manages the flow of orders and assigns them to the available cooking stations.

How the M:N Model Works

Assigning Orders: The head chef gives an order to one of the assistant chefs at an empty cooking station.

Handling “Waiting” (Blocking I/O): An assistant chef is working on a complex dish that requires 10 minutes to simmer unattended (a “blocking I/O” operation, like waiting for a database response).

The Efficient Switch: The head chef immediately has the simmering dish set aside and gives the now-free cooking station a new order. The dish goes back to a station only when the 10 minutes are up and it needs attention again.

Balancing Work: The head chef continuously monitors all stations, ensuring no station sits idle while orders are waiting. If one station builds up a backlog, an idle station can even take some of its orders (work stealing).

What is Work-Stealing?

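Imagine 4 chefs (P1, P2, P3, P4), each with their own queue of tasks (Gs). In the Go runtime's own terminology, a P is a logical processor that holds a local queue of runnable goroutines, a G is a goroutine, and an M is an OS thread that must hold a P to run Go code. (This runtime M is not the M in "M:N".)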

If P1 finishes its work but P2 still has 20 tasks:

P1 steals about half of the goroutines in P2's queue to balance the load.

This keeps CPU cores from sitting idle while runnable work is waiting elsewhere.

In simpler words:

Idle Ps take work from busy Ps to keep the CPU fully utilized.
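The toy simulation below models the idea with ordinary goroutines: each hypothetical worker owns a mutex-protected queue, all 20 tasks start on worker 1 (playing P2), and a worker whose queue is empty steals half of another worker's queue. It is a sketch of the work-stealing technique, not the Go runtime's implementation, whose per-P run queues differ in many details; worker, stealHalf, and simulate are illustrative names.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// worker is a hypothetical stand-in for a P: it owns a local queue of task IDs.
type worker struct {
	mu    sync.Mutex
	queue []int
}

// pop takes the newest task from the worker's own queue.
func (w *worker) pop() (int, bool) {
	w.mu.Lock()
	defer w.mu.Unlock()
	if len(w.queue) == 0 {
		return 0, false
	}
	t := w.queue[len(w.queue)-1]
	w.queue = w.queue[:len(w.queue)-1]
	return t, true
}

// stealHalf moves the older half (rounded up) of victim's queue into thief's.
func stealHalf(thief, victim *worker) bool {
	victim.mu.Lock()
	n := (len(victim.queue) + 1) / 2
	stolen := append([]int(nil), victim.queue[:n]...)
	victim.queue = victim.queue[n:]
	victim.mu.Unlock()
	if n == 0 {
		return false
	}
	thief.mu.Lock()
	thief.queue = append(thief.queue, stolen...)
	thief.mu.Unlock()
	return true
}

// simulate puts every task on worker 1 and lets idle workers steal from it.
// It returns how many tasks each worker ran and whether each task ran exactly once.
func simulate(numWorkers, numTasks int) ([]int, bool) {
	workers := make([]*worker, numWorkers)
	for i := range workers {
		workers[i] = &worker{}
	}
	for t := 0; t < numTasks; t++ {
		workers[1].queue = append(workers[1].queue, t)
	}

	var mu sync.Mutex
	runs := make(map[int]int) // task ID -> times executed
	perWorker := make([]int, numWorkers)

	var wg sync.WaitGroup
	for id, w := range workers {
		wg.Add(1)
		go func(id int, w *worker) {
			defer wg.Done()
			for {
				t, ok := w.pop()
				if !ok {
					stole := false
					for _, v := range workers { // local queue empty: look for work elsewhere
						if v != w && stealHalf(w, v) {
							stole = true
							break
						}
					}
					if !stole {
						return // no work left in any queue this worker checked
					}
					continue
				}
				time.Sleep(time.Millisecond) // simulated work
				mu.Lock()
				runs[t]++
				perWorker[id]++
				mu.Unlock()
			}
		}(id, w)
	}
	wg.Wait()

	once := len(runs) == numTasks
	for _, c := range runs {
		once = once && c == 1
	}
	return perWorker, once
}

func main() {
	perWorker, once := simulate(4, 20)
	fmt.Println("tasks run per worker:", perWorker)
	fmt.Println("every task ran exactly once:", once)
}
```

The per-worker counts change from run to run, but every task should run exactly once, and the workers that started idle end up doing a share of the work that began on a single queue.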

What is GOMAXPROCS?

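runtime.GOMAXPROCS(n) sets how many Ps exist, that is, the maximum number of OS threads that can execute Go code simultaneously. Threads blocked in system calls don't count toward this limit, so the runtime may use more threads in total.

By default, it is set from the number of logical CPUs available to the process (see runtime.NumCPU), and it can also be set with the GOMAXPROCS environment variable.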

What is Preemption?

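Imagine one goroutine keeps running forever, like an infinite loop. Other goroutines would never get a turn unless Go can interrupt it. That is what preemption does: the scheduler interrupts a long-running goroutine to give others a turn. Since Go 1.14, preemption is asynchronous, so even a tight loop with no function calls can be interrupted; earlier versions could only preempt a goroutine when it made a function call. Because of this, no goroutine can hog the CPU forever.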
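The sketch below shows preemption at work, assuming Go 1.14 or later on a platform that supports asynchronous preemption. With GOMAXPROCS set to 1, a goroutine spinning in an empty loop would otherwise keep the only thread forever, and main could never print its message after sleeping.

```go
package main

import (
	"fmt"
	"runtime"
	"time"
)

// spin is a tight loop with no function calls: the kind of code that could
// starve other goroutines before Go 1.14 added asynchronous preemption.
func spin() {
	for {
	}
}

func main() {
	runtime.GOMAXPROCS(1) // one thread for Go code, so spin and main must share it
	go spin()
	time.Sleep(50 * time.Millisecond) // spin takes over the thread while main sleeps
	// main can only reach this line if the scheduler preempts spin.
	fmt.Println("main goroutine got the CPU back")
}
```

On Go 1.13 or earlier, or with asynchronous preemption disabled via GODEBUG=asyncpreemptoff=1, this program can hang instead.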

Real-World Example — Concurrent Web Requests

```go
package main

import (
	"fmt"
	"net/http"
)

func fetch(url string) {
	resp, err := http.Get(url)
	if err != nil {
		fmt.Println("Error fetching:", url, err)
		return
	}
	defer resp.Body.Close()
	fmt.Println(url, "->", resp.Status)
}

func main() {
	urls := []string{
		"https://golang.org",
		"https://google.com",
		"https://github.com",
	}

	for _, url := range urls {
		go fetch(url) // fetch all concurrently
	}

	fmt.Scanln() // block main goroutine until Enter is pressed
}
```

Output (order may vary)

```
https://golang.org -> 200 OK
https://google.com -> 200 OK
https://github.com -> 200 OK
```

All three requests run concurrently.

The program doesn't wait for one request to finish before starting the next.

This is Go's concurrency power: simple syntax, and the requests finish in roughly the time of the slowest one rather than the sum of all three.

Let’s understand this code using a kitchen analogy.

In this analogy:

main() function: The owner of the restaurant.

fetch() function: A detailed recipe for preparing one specific dish (fetching a URL).

go fetch(url): Handing an order ticket to the head chef and asking for the dish to be prepared right away.

The websites (golang.org, etc.): Suppliers with whom the kitchen must communicate.

fmt.Scanln(): The restaurant owner waiting patiently at the counter until all orders are confirmed complete.

Setting Up the Restaurant (the start of the main function)

```go
func main() {
	urls := []string{
		"https://golang.org",
		"https://google.com",
		"https://github.com",
	}
	// ...
}
```

The restaurant owner (the main function) writes down three specific orders on tickets: one for golang.org soup, one for google.com salad, and one for github.com steak.

Handing Off Orders to the Kitchen (the go keyword)

```go
for _, url := range urls {
	go fetch(url) // fetch all concurrently
}
```

The owner hands all three order tickets to the Head Chef (the Go Runtime Scheduler) in quick succession, without waiting for any dish to be finished: “Start cooking all of these now!”

The go keyword is crucial here. It tells the head chef to use the M:N scheduling model:

Each fetch(url) call becomes a separate recipe/order (goroutine).

The head chef queues these recipes and assigns them to the available cooking stations (OS threads) in the kitchen as stations become free.

Preparing a Single Dish (the fetch function)

```go
func fetch(url string) {
	resp, err := http.Get(url)
	// ...
}
```

This is where the magic of the M:N model happens:

Calling the Supplier (http.Get(url)): The assistant chef at a cooking station starts preparing the dish but immediately finds they need a specific ingredient from a supplier (making a network request).

The Head Chef Manages the Wait: The supplier is slow to respond (the goroutine is blocked on network I/O). Instead of letting the whole cooking station sit idle while this dish waits, the Head Chef (Go scheduler) sets the dish aside.

Efficient Kitchen Use: The dish waits by the back door for the supplier's delivery, and the cooking station immediately starts on another recipe that is ready for CPU work. This keeps all stations busy!

Finishing the Dish (fmt.Println(…)): Once the supplier arrives with the data, the dish goes back into the queue, and the next available station picks it up and confirms the order status (https://golang.org -> 200 OK).

The Owner Waits for Confirmation (fmt.Scanln())

```go
fmt.Scanln() // block main goroutine
```

This line is necessary because the restaurant owner (the main function) needs to stay in the building until all three orders are served. Without it, main would return right after handing the tickets to the chef, and when main returns the whole program exits, shutting the kitchen down before the dishes are even started. Keep in mind that fmt.Scanln() simply waits for you to press Enter; it doesn't know when the goroutines have finished. We use it here only for simplicity; there are better alternatives, which we will learn about later.

In this analogy:

| Analogy Component | Technical Term |
| --- | --- |
| Recipes / Orders | Goroutines (M) |
| Cooking Stations / Stoves | OS Threads (N) |
| Head Chef / Expediter | Go Runtime Scheduler |

The assistant chefs are part of the OS threads (cooking stations) too: an OS thread is the combination of the physical cooking station (the hardware resource) and the assistant chef working at it (the execution mechanism).

The Head Chef (Go Runtime Scheduler) is the management layer above the assistant chefs, telling them which recipes (goroutines) to work on.

Multiprocessing in Go

Go programs don't need multiple processes to use multiple CPU cores. Instead, Go achieves similar benefits within a single process using goroutines and OS threads: the runtime automatically schedules goroutines across multiple CPU cores, up to the limit set by GOMAXPROCS.

This means:

Go can run goroutines in parallel on different CPU cores.

The runtime handles thread creation, scheduling, and load balancing for you.

Developers get parallel execution without manually managing processes or threads.

So even though you don’t explicitly create “processes,” Go delivers multiprocessing-like performance through its efficient runtime scheduler.

FAQ Section

Are goroutines the same as threads?

No. Goroutines are not OS threads. They are lightweight functions managed by the Go runtime scheduler. Multiple goroutines run on top of a smaller set of OS threads.

How many goroutines can I run at once?

Usually hundreds of thousands or even millions, depending on RAM. Threads cannot scale anywhere close to this.

Does each goroutine use its own thread?

No. Goroutines are multiplexed onto a pool of worker threads using Go’s M:N scheduler. Multiple goroutines share a single thread (not simultaneously, but with fast switching).

Is Go single-threaded or multithreaded?

Go is multithreaded under the hood. Your program uses multiple OS threads automatically; you don’t need to manage them.

Are goroutines good for CPU-intensive tasks?

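Yes, but all CPU-bound goroutines compete for the same CPU cores, so running more of them than GOMAXPROCS (by default, the number of available CPUs) adds no extra parallelism. For heavy CPU work, splitting the job into roughly GOMAXPROCS pieces is a common approach; raising runtime.GOMAXPROCS(n) above the number of cores usually doesn't help.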

Do goroutines run in parallel?

Yes, if your machine has multiple CPU cores and GOMAXPROCS is greater than 1 (the default on multi-core machines). Otherwise, they run concurrently (taking turns), but still efficiently.

Why are goroutines so lightweight?

Because they use:

a tiny initial stack (2 KB).

dynamic stack resizing.

a user-space scheduler.

cooperative + async preemption.

Conclusion

Goroutines make concurrency in Go simple, lightweight, and incredibly scalable. Instead of dealing with heavy OS threads, Go gives you tiny, fast goroutines managed by an efficient runtime scheduler. This allows you to run thousands or even millions of concurrent tasks with minimal memory and almost no complexity.

What’s Next?

Now that goroutines are clear, the next step is understanding how they communicate and synchronize.

Up next, we’ll cover:

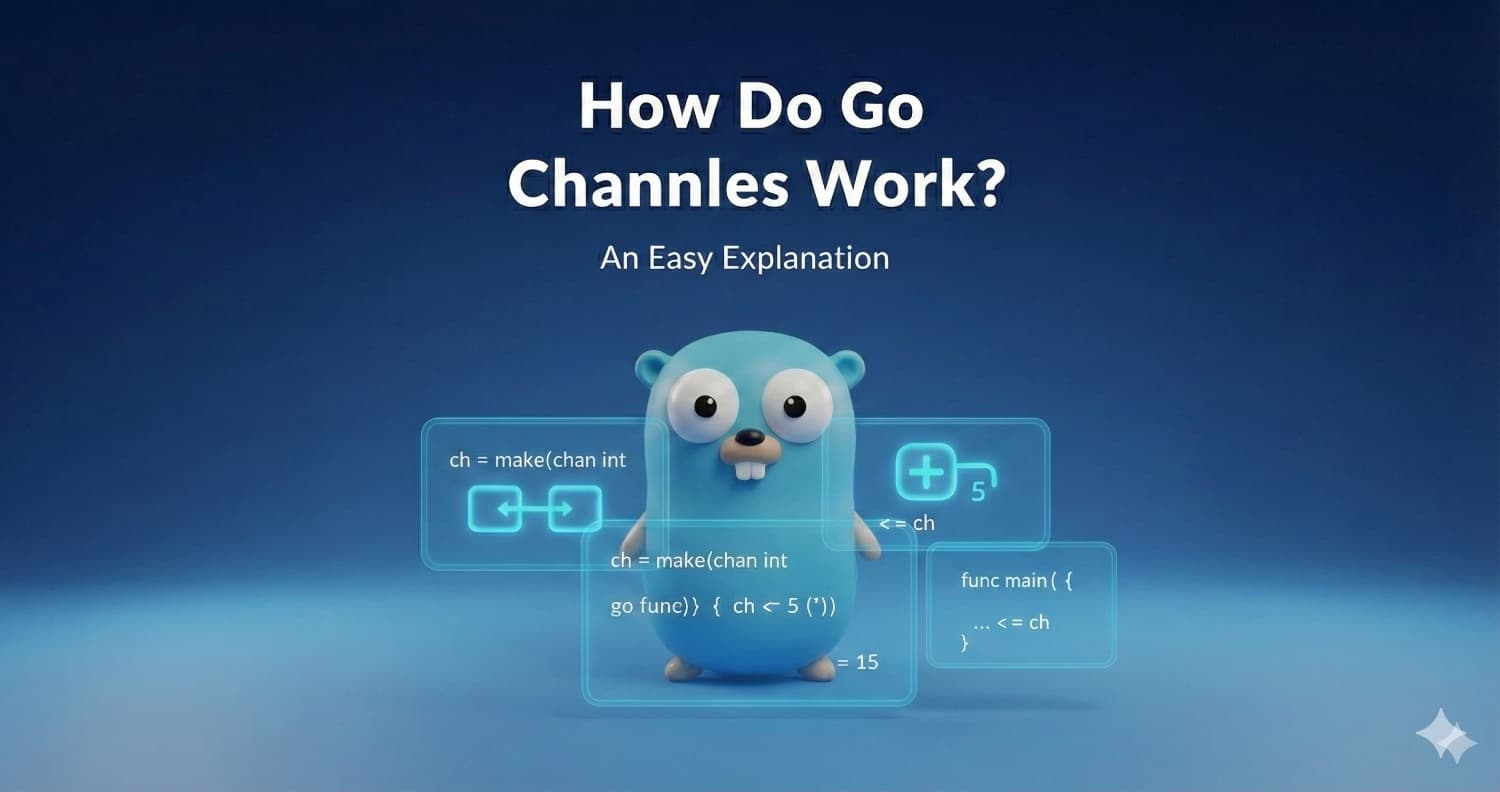

Channels: how goroutines safely exchange data

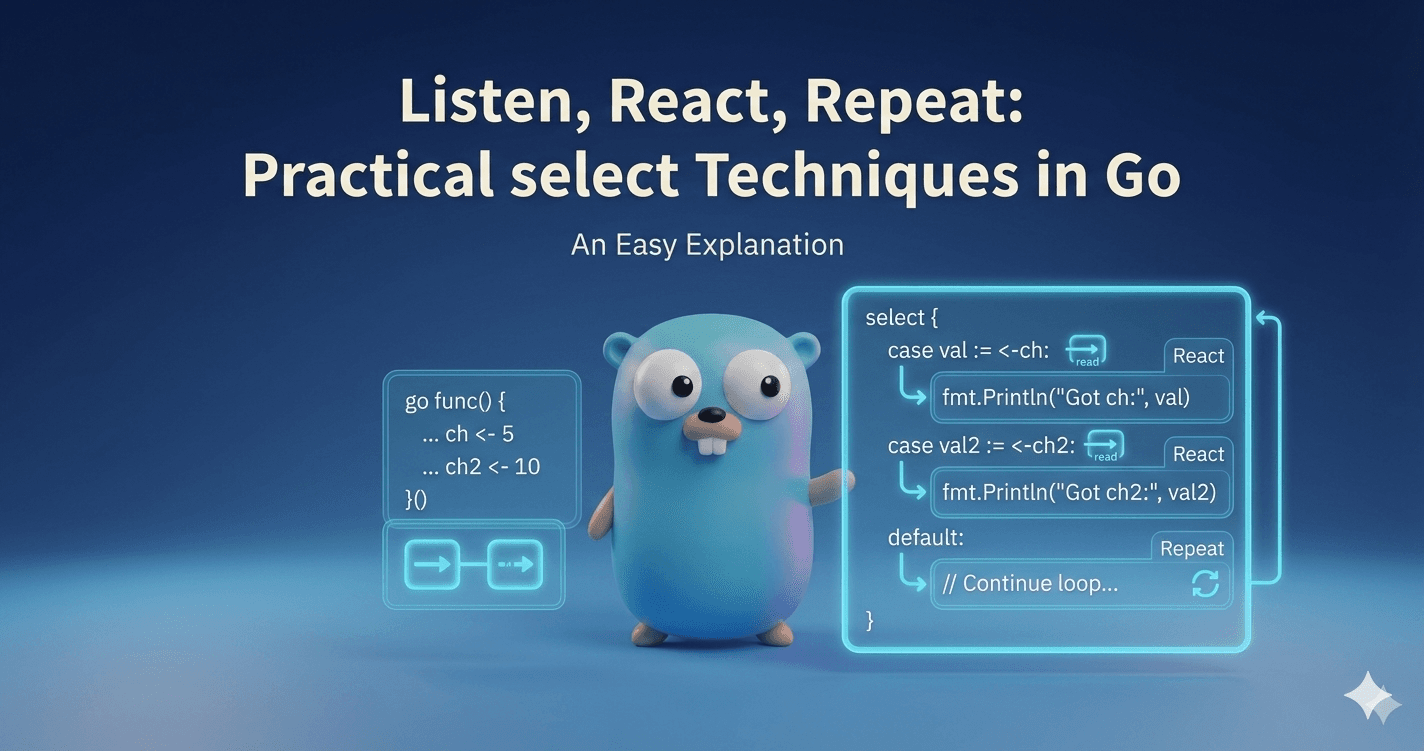

Select: handling multiple concurrent operations

WaitGroup & Mutex: essential sync tools

Real patterns like worker pools and pipelines

This will complete your core understanding of Go’s concurrency model.